Reference Architecture: OpenClaw (Early Feb 2026 Edition, Opus 4.6)

I asked OpenClaw, running Opus 4.6, to dive deep into its own source code and produce a detailed architecture document. I like the output, and I think others will find it useful, since I haven't seen the architecture described in this much detail anywhere else.

Update: you can grab the most recent architecture deep dive in this repo, which is auto-generated using reposwarm.

Thoughts:

- Overall I've been impressed with Opus 4.6's ability, and OpenClaw did a fine job of investigating itself. I did not use Claude Code; the OpenClaw harness seems to have done a thorough job on its own.

- I really like the OpenClaw architecture. The memory system is both simple and resilient, and it has held up over the past 3 weeks across more than a dozen projects. I also like the gateway, the queuing, and the overall usability.

You can download it in this gist, or give it to your OpenClaw or favorite agent if you want to use it:

Here's the full text from that gist:

OpenClaw: Architecture Deep Dive

For Software Architects — How an AI Agent System Actually Works Under the Hood

1. Executive Summary

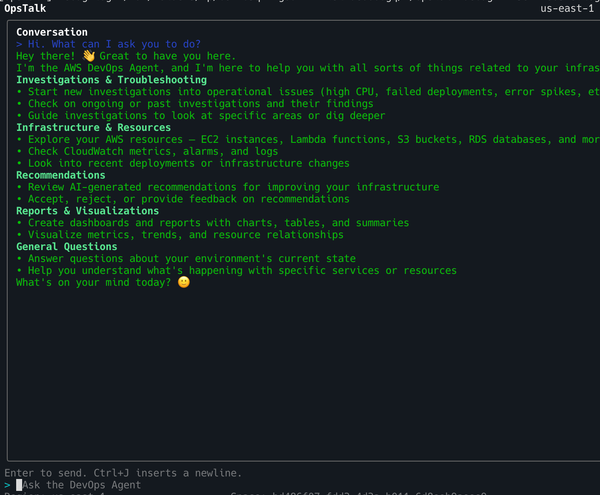

OpenClaw is an open-source, self-hosted AI agent framework that turns large language models into persistent, tool-using assistants with real-world integrations. Unlike chatbot wrappers that simply proxy API calls, OpenClaw implements a full agent runtime with session management, memory persistence, context window optimization, multi-channel messaging, sandboxed tool execution, and event-driven extensibility.

This document is a technical deep dive into how OpenClaw works — not just the AWS infrastructure it runs on, but the software architecture decisions that make a stateless LLM behave like a stateful, continuously-available assistant.

2. High-Level Architecture

┌─────────────────────────────────────────────────────────┐

│ Messaging Surfaces │

│ WhatsApp · Telegram · Discord · Slack · Signal · Web │

└──────────────────────────┬──────────────────────────────┘

│ WebSocket / HTTP

▼

┌─────────────────────────────────────────────────────────┐

│ Gateway (Daemon) │

│ ┌──────────┐ ┌──────────┐ ┌──────────┐ ┌───────────┐ │

│ │ Channel │ │ Session │ │ Command │ │ Plugin │ │

│ │ Bridges │ │ Manager │ │ Queue │ │ System │ │

│ └──────────┘ └──────────┘ └──────────┘ └───────────┘ │

│ ┌──────────┐ ┌──────────┐ ┌──────────┐ ┌───────────┐ │

│ │ Hooks │ │ Cron │ │Heartbeat │ │ Auth │ │

│ │ Engine │ │ Scheduler│ │ System │ │ + Trust │ │

│ └──────────┘ └──────────┘ └──────────┘ └───────────┘ │

└──────────────────────────┬──────────────────────────────┘

│

▼

┌─────────────────────────────────────────────────────────┐

│ Agent Runtime (pi-mono) │

│ ┌──────────┐ ┌──────────┐ ┌──────────┐ ┌───────────┐ │

│ │ Prompt │ │ Tool │ │Compaction│ │ Memory │ │

│ │ Assembly │ │Execution │ │ Pipeline │ │ Search │ │

│ └──────────┘ └──────────┘ └──────────┘ └───────────┘ │

│ ┌──────────┐ ┌──────────┐ ┌──────────┐ ┌───────────┐ │

│ │ Streaming│ │Sub-Agent │ │ Skill │ │ Sandbox │ │

│ │ Engine │ │ Spawner │ │ Loader │ │ Manager │ │

│ └──────────┘ └──────────┘ └──────────┘ └───────────┘ │

└──────────────────────────┬──────────────────────────────┘

│

▼

┌─────────────────────────────────────────────────────────┐

│ LLM Providers │

│ Anthropic · AWS Bedrock · OpenAI · Google · Local │

└─────────────────────────────────────────────────────────┘

3. The Gateway — The Central Nervous System

The Gateway is a single long-lived Node.js daemon that owns all state and connections. Think of it as the application server — everything flows through it.

3.1 WebSocket-First Protocol

The Gateway exposes a typed WebSocket API on a configurable port (default 127.0.0.1:18789). All clients — the macOS app, CLI, web UI, mobile nodes, and automations — connect over this single WebSocket.

Wire protocol:

- Transport: WebSocket, text frames with JSON payloads

- First frame must be a `connect` handshake

- Requests: `{type:"req", id, method, params}` → `{type:"res", id, ok, payload|error}`

- Events: `{type:"event", event, payload, seq?, stateVersion?}`

- Idempotency keys are required for side-effecting methods (`send`, `agent`) for safe retries
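To make the frame shapes concrete, here is a minimal sketch of how a client might type and validate them. The frame fields come from the bullets above; the helper names (`buildRequest`, `parseFrame`) and the `idempotencyKey` parameter name are assumptions for illustration, not OpenClaw's actual client API.

```typescript
// Frame shapes follow the wire protocol bullets; helper names are hypothetical.
type ReqFrame = { type: "req"; id: string; method: string; params: Record<string, unknown> };
type ResFrame =
  | { type: "res"; id: string; ok: true; payload: unknown }
  | { type: "res"; id: string; ok: false; error: unknown };
type EventFrame = { type: "event"; event: string; payload: unknown; seq?: number; stateVersion?: number };
type Frame = ReqFrame | ResFrame | EventFrame;

// Methods the text names as side-effecting.
const SIDE_EFFECTING: string[] = ["send", "agent"];

let nextId = 0;

// Build a request frame. Side-effecting methods must carry an idempotency key
// so a retry after a dropped connection cannot run the action twice.
// (The `idempotencyKey` param name is an assumption.)
function buildRequest(method: string, params: Record<string, unknown>): ReqFrame {
  if (SIDE_EFFECTING.indexOf(method) !== -1 && typeof params["idempotencyKey"] !== "string") {
    throw new Error(`method "${method}" requires an idempotency key`);
  }
  nextId += 1;
  return { type: "req", id: `req-${nextId}`, method, params };
}

// Parse one text frame and accept it only if its `type` discriminator is known.
function parseFrame(text: string): Frame {
  const raw: unknown = JSON.parse(text);
  if (typeof raw === "object" && raw !== null) {
    const kind = (raw as { type?: unknown }).type;
    if (kind === "req" || kind === "res" || kind === "event") return raw as Frame;
  }
  throw new Error("unknown frame type");
}
```

A client would send `JSON.stringify(buildRequest(...))` as a text frame and match each `res` frame to its request by `id`.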

Connection lifecycle:

Client Gateway

|---- req:connect -------->|

|<------ res (ok) ---------| (hello-ok: presence + health snapshot)

|<------ event:presence ---|

|<------ event:tick -------|

|------- req:agent ------->|

|<------ res:agent --------| (ack: runId, status:"accepted")

|<------ event:agent ------| (streaming deltas)

|<------ res:agent --------| (final: runId, status, summary)

3.2 Device Pairing & Trust Model

All WebSocket clients declare a device identity on connect. The Gateway implements a trust model:

- Local connects (loopback/same-host Tailnet) can be auto-approved

- Non-local connects must sign a challenge nonce and require explicit approval

- Device tokens are issued after pairing for subsequent reconnects

- Gateway auth token (

OPENCLAW_GATEWAY_TOKEN) applies to all connections

3.3 Channel Bridges

The Gateway maintains persistent connections to messaging platforms:

- WhatsApp via Baileys (web protocol)

- Telegram via grammY

- Discord, Slack, Signal, iMessage via respective SDKs

- WebChat static UI using the Gateway WS API

Each bridge translates platform-specific events into a normalized internal envelope. The key architectural insight: the Gateway is the only process that holds messaging sessions — exactly one WhatsApp session per host, one Telegram bot connection, etc.

4. The Agent Loop — How a Message Becomes Action

When an inbound message arrives, this is the full lifecycle:

4.1 Intake & Routing

Inbound message → Channel Bridge → Session Resolution → Command Queue → Agent Runtime

- Channel bridge normalizes the message (sender, content, attachments, thread context)

- Session manager resolves to a session key based on `dmScope` rules:

  - `main`: all DMs share a single session (continuity across devices/channels)

  - `per-peer`: isolated by sender ID

  - `per-channel-peer`: isolated by channel + sender (recommended for multi-user)

- Command queue serializes the run

4.2 The Command Queue

This is a lane-aware FIFO queue that prevents concurrent agent runs from colliding:

┌─────────────────────────────────────────┐

│ Global Lane (main) │

│ maxConcurrent: 4 (configurable) │

│ ┌─────────────────────────────────┐ │

│ │ Session Lane (per session key) │ │

│ │ concurrency: 1 (strict serial) │ │

│ └─────────────────────────────────┘ │

│ ┌─────────────────────────────────┐ │

│ │ Sub-agent Lane │ │

│ │ concurrency: 8 │ │

│ └─────────────────────────────────┘ │

│ ┌─────────────────────────────────┐ │

│ │ Cron Lane │ │

│ │ parallel with main │ │

│ └─────────────────────────────────┘ │

└─────────────────────────────────────────┘

Queue modes control how inbound messages interact with active runs:

- `collect` (default): coalesce queued messages into a single followup turn

- `steer`: inject into the current run, cancelling pending tool calls at the next boundary

- `followup`: wait for the current run to end, then start a new turn

- `steer-backlog`: steer now AND preserve for a followup
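The lane rules can be sketched as a pure scheduling decision. This is a simplified illustration, not the Gateway's implementation: the `Job` shape and function names are hypothetical, and only the main lane's two constraints (global cap, one run per session key) and the `collect` mode are modeled.

```typescript
// Hypothetical job shape; the limits mirror the queue diagram.
interface Job {
  id: string;
  sessionKey: string;
}

// Pick the queued jobs that may start now: FIFO order, at most one active
// run per session key, and at most `maxConcurrent` runs overall.
function pickRunnable(queue: Job[], active: Job[], maxConcurrent: number): Job[] {
  const busySessions: string[] = active.map((j) => j.sessionKey);
  const picked: Job[] = [];
  for (const job of queue) {
    if (active.length + picked.length >= maxConcurrent) break;
    // Session lane is strictly serial: skip jobs whose session is already running.
    if (busySessions.indexOf(job.sessionKey) !== -1) continue;
    busySessions.push(job.sessionKey);
    picked.push(job);
  }
  return picked;
}

// `collect` mode: fold messages that arrived during a run into one followup turn.
function collectFollowup(queuedMessages: string[]): string | null {
  return queuedMessages.length === 0 ? null : queuedMessages.join("\n");
}
```

Because a second job for a busy session is skipped rather than blocking the queue, a slow session does not stall unrelated sessions.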

4.3 Prompt Assembly

OpenClaw builds a custom system prompt for every agent run. This is not a static string — it's assembled from multiple sources:

System Prompt =

Tooling (available tools + descriptions)

+ Safety (guardrails)

+ Skills (available skill list with file paths)

+ Self-Update instructions

+ Workspace info

+ Documentation pointers

+ Current Date & Time (timezone-aware)

+ Reply Tags

+ Heartbeat contract

+ Runtime metadata (host/OS/model/thinking level)

+ ── Project Context ──

+ AGENTS.md (operating instructions)

+ SOUL.md (persona/tone)

+ TOOLS.md (local tool notes)

+ IDENTITY.md (agent name/vibe)

+ USER.md (user profile)

+ HEARTBEAT.md (periodic task checklist)

Key design decisions:

- Bootstrap files are truncated at `bootstrapMaxChars` (default 20,000 chars) to keep prompts lean

- Skills are listed as metadata only (name + description + file path); the model reads the full SKILL.md on demand

- Tool schemas (JSON) are sent alongside but count toward context even though they're not visible text

- Time is timezone-only (no dynamic clock) to keep prompt cache-stable across turns
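The assembly and truncation steps above can be sketched as follows. The function names, the `[truncated]` marker, and the section separator are assumptions for illustration; only the `bootstrapMaxChars` default of 20,000 comes from the text.

```typescript
// Hypothetical shape for a workspace bootstrap file such as AGENTS.md.
interface BootstrapFile {
  name: string;
  content: string;
}

// Cap a bootstrap file at maxChars. The marker text is illustrative only.
function truncateBootstrap(content: string, maxChars: number): string {
  if (content.length <= maxChars) return content;
  return content.slice(0, maxChars) + "\n[truncated]";
}

// Join the fixed sections, then the project context files, into one prompt.
function assembleSystemPrompt(
  sections: string[],
  files: BootstrapFile[],
  bootstrapMaxChars: number = 20000
): string {
  const context = files.map(
    (f) => `## ${f.name}\n${truncateBootstrap(f.content, bootstrapMaxChars)}`
  );
  return [...sections, "── Project Context ──", ...context].join("\n\n");
}
```

Keeping the fixed sections first and free of per-turn values (such as a ticking clock) is what lets a provider-side prompt cache reuse the prefix across turns.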

4.4 Model Inference & Streaming

The embedded runtime (pi-mono) handles the actual LLM interaction:

- Resolves model + auth profile

- Serializes runs via per-session + global queues

- Streams assistant deltas as `event:agent` frames

- Tool calls are executed between inference rounds (agentic loop)

- Enforces timeout (default 600s)

Streaming architecture:

- Assistant text deltas stream in real-time

- Tool start/update/end events are emitted on a separate `tool` stream

- Block streaming can emit completed blocks as soon as they finish (configurable chunking at 800-1200 chars, preferring paragraph breaks)

- `NO_REPLY` is a sentinel token filtered from outgoing payloads (enables silent turns)

4.5 Tool Execution

Tools are the agent's hands. Core tools are always available (subject to policy):

- `read` / `write` / `edit`: file operations

- `exec` / `process`: shell command execution + background process management

- `browser`: browser automation (CDP-based)

- `web_search` / `web_fetch`: web access

- `message`: cross-channel messaging

- `cron`: scheduled job management

- `memory_search` / `memory_get`: semantic memory retrieval

- `sessions_spawn` / `sessions_send`: sub-agent orchestration

- `nodes`: paired device control (camera, screen, location, run)

- `canvas`: UI canvas control

- `tts`: text-to-speech

- `gateway`: self-management (restart, config, update)

Tool policy is a layered allow/deny system:

Global deny → Per-agent deny → Global allow → Per-agent allow → Default
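One way to read that chain is as a first-match-wins evaluation, sketched below. The first-match interpretation, the `ToolPolicy` shape, and the function name are assumptions; the source only gives the order of the layers.

```typescript
// Hypothetical policy shape: explicit allow and deny lists of tool names.
interface ToolPolicy {
  allow: string[];
  deny: string[];
}

type Decision = "allow" | "deny";

// Walk the layers in the documented order; the first layer that mentions
// the tool decides. Deny layers come first, so a deny always beats an allow.
function decideTool(
  tool: string,
  globalPolicy: ToolPolicy,
  agentPolicy: ToolPolicy,
  fallback: Decision
): Decision {
  if (globalPolicy.deny.indexOf(tool) !== -1) return "deny";
  if (agentPolicy.deny.indexOf(tool) !== -1) return "deny";
  if (globalPolicy.allow.indexOf(tool) !== -1) return "allow";
  if (agentPolicy.allow.indexOf(tool) !== -1) return "allow";
  return fallback;
}
```

Under this reading, a per-agent allow cannot override a global deny, which is the property you want from a layered policy.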

5. Memory Architecture — How a Stateless Model Remembers

This is perhaps the most architecturally interesting part. LLMs have no persistent memory — every session starts from zero. OpenClaw solves this with a layered memory system:

5.1 The Memory Stack

┌─────────────────────────────────────────┐

│ Layer 4: Semantic Vector Search │ ← "Find related notes even when

│ (SQLite + embeddings) │ wording differs"

├─────────────────────────────────────────┤

│ Layer 3: Long-term Memory (MEMORY.md) │ ← Curated insights, decisions,

│ (manually maintained) │ preferences

├─────────────────────────────────────────┤

│ Layer 2: Daily Logs (memory/YYYY-MM-DD)│ ← Raw daily notes, append-only

│ (auto + manual) │

├─────────────────────────────────────────┤

│ Layer 1: Session Context │ ← Current conversation in the

│ (JSONL transcript) │ model's context window

└─────────────────────────────────────────┘

5.2 Workspace Files as Memory

Memory is plain Markdown in the agent workspace. The files are the source of truth; the model only "remembers" what gets written to disk.

- `memory/YYYY-MM-DD.md`: Daily log files (append-only). Today + yesterday are read at session start.

- `MEMORY.md`: Curated long-term memory. Only loaded in the main private session (never in group contexts, as a security boundary).

- `AGENTS.md`: Operating instructions, injected every session.

- `SOUL.md`: Persona and boundaries, injected every session.

The fundamental insight: the agent is instructed to write to these files whenever it learns something worth remembering. "Mental notes" don't survive session restarts — files do.

5.3 Vector Memory Search (Semantic Recall)

OpenClaw builds a vector index over memory files for semantic search:

Indexing pipeline:

Markdown files → Chunking (~400 tokens, 80-token overlap)

→ Embedding (OpenAI / Gemini / Local GGUF)

→ SQLite storage (with optional sqlite-vec acceleration)

→ File watcher (debounced 1.5s) for incremental updates
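The chunking step of that pipeline can be sketched as a sliding window. This is an approximation for illustration: tokens are counted as whitespace-separated words, which is an assumption (a real tokenizer would count differently), and `chunkText` is a hypothetical name.

```typescript
// Split text into overlapping chunks. Each chunk holds up to `chunkTokens`
// words, and consecutive chunks share `overlapTokens` words so that a
// sentence straddling a boundary still appears whole in one chunk.
function chunkText(text: string, chunkTokens: number = 400, overlapTokens: number = 80): string[] {
  if (overlapTokens >= chunkTokens) {
    throw new Error("overlap must be smaller than chunk size");
  }
  const words = text.split(/\s+/).filter((w) => w.length > 0);
  const chunks: string[] = [];
  const step = chunkTokens - overlapTokens;
  for (let start = 0; start < words.length; start += step) {
    chunks.push(words.slice(start, start + chunkTokens).join(" "));
    // Stop once this chunk reached the end; avoids a trailing overlap-only chunk.
    if (start + chunkTokens >= words.length) break;
  }
  return chunks;
}
```

With the defaults, each new chunk starts 320 words after the previous one.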

Hybrid search (BM25 + Vector):

- Vector similarity: semantic match ("Mac Studio gateway host" matches "the machine running the gateway")

- BM25 keyword relevance: exact token match (IDs, code symbols, error strings)

- Scores are combined: `finalScore = vectorWeight × vectorScore + textWeight × textScore`

- Default weights: 70% vector, 30% BM25
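A minimal sketch of that score merge, using the formula and default weights from the bullets. The `Candidate` shape and `hybridRank` name are hypothetical, and the sketch assumes both scores are already normalized to the 0..1 range, with a missing score counting as 0.

```typescript
// A chunk found by either search path; a chunk may have only one score.
interface Candidate {
  id: string;
  vectorScore?: number; // assumed normalized to 0..1
  textScore?: number;   // assumed normalized to 0..1
}

// finalScore = vectorWeight * vectorScore + textWeight * textScore,
// sorted best-first.
function hybridRank(
  candidates: Candidate[],
  vectorWeight: number = 0.7,
  textWeight: number = 0.3
): { id: string; finalScore: number }[] {
  return candidates
    .map((c) => ({
      id: c.id,
      finalScore: vectorWeight * (c.vectorScore ?? 0) + textWeight * (c.textScore ?? 0),
    }))
    .sort((a, b) => b.finalScore - a.finalScore);
}
```

A chunk that matches an exact error string but is semantically distant still surfaces through its BM25 term, just below strong semantic matches.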

Embedding providers (auto-selected with fallback chain):

- Local GGUF model via node-llama-cpp (~0.6 GB)

- OpenAI `text-embedding-3-small`

- Google Gemini `gemini-embedding-001`

Reindex triggers: the index stores the embedding provider/model + endpoint fingerprint + chunking params. If any change, OpenClaw automatically resets and reindexes.

Tools exposed to the agent:

- `memory_search`: semantic search returning snippets with file + line ranges

- `memory_get`: read specific memory file content by path

5.4 Pre-Compaction Memory Flush

When a session nears auto-compaction (context window getting full), OpenClaw triggers a silent agentic turn that reminds the model to write durable notes to disk before the context is summarized:

Session approaching context limit

→ Silent memory flush turn fires

→ Model writes important context to memory/YYYY-MM-DD.md

→ Model replies NO_REPLY (user never sees this turn)

→ Auto-compaction proceeds safely

This is controlled by a soft token threshold and runs at most once per compaction cycle.
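The trigger logic can be sketched as a small state machine. The names, the way the threshold is subtracted from the compaction point, and the numbers in the usage note are all assumptions for illustration; the source states only that a soft threshold exists and that the flush runs at most once per cycle.

```typescript
// Tracks whether the flush already ran in the current compaction cycle.
interface CompactionCycle {
  flushed: boolean;
}

type TurnAction = "flush" | "compact" | "continue";

// Decide what to do before the next turn, given the current token usage.
function nextAction(
  usedTokens: number,
  compactAtTokens: number,     // point at which auto-compaction would run
  softThresholdTokens: number, // how far before that point the flush fires
  cycle: CompactionCycle
): TurnAction {
  if (!cycle.flushed && usedTokens >= compactAtTokens - softThresholdTokens) {
    cycle.flushed = true; // at most one flush per compaction cycle
    return "flush";
  }
  if (usedTokens >= compactAtTokens) {
    cycle.flushed = false; // compaction starts a new cycle
    return "compact";
  }
  return "continue";
}
```

For example, with a compaction point of 180,000 tokens and a soft threshold of 4,000, the silent flush turn would fire once usage reaches 176,000.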

6. Session Management — Continuity Across Conversations

6.1 Session Keys & Routing

Every conversation maps to a session key:

- Direct messages:

  - `agent:<agentId>:<mainKey>` (dmScope: "main")

  - `agent:<agentId>:dm:<peerId>` (dmScope: "per-peer")

  - `agent:<agentId>:<channel>:dm:<peerId>` (dmScope: "per-channel-peer")

- Group chats: `agent:<agentId>:<channel>:group:<id>`

- Telegram topics: `agent:<agentId>:<channel>:group:<id>:topic:<threadId>`

- Cron jobs: `cron:<job.id>`

- Webhooks: `hook:<uuid>`

- Sub-agents: `agent:<agentId>:subagent:<uuid>`
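As an illustration of how those key formats follow from `dmScope`, here is a sketch of a resolver for direct messages, groups, and topics. The `Inbound` shape, the function name, and the default `mainKey` value of `"main"` are assumptions; the key formats are the ones listed above.

```typescript
type DmScope = "main" | "per-peer" | "per-channel-peer";

// Hypothetical normalized envelope produced by a channel bridge.
interface Inbound {
  agentId: string;
  channel: string;
  peerId: string;
  groupId?: string;
  threadId?: string;
}

// Map an inbound message to its session key.
function resolveSessionKey(msg: Inbound, dmScope: DmScope, mainKey: string = "main"): string {
  const base = `agent:${msg.agentId}`;
  if (msg.groupId !== undefined) {
    // Groups are always isolated per channel + group, optionally per topic.
    const group = `${base}:${msg.channel}:group:${msg.groupId}`;
    return msg.threadId !== undefined ? `${group}:topic:${msg.threadId}` : group;
  }
  if (dmScope === "main") return `${base}:${mainKey}`;
  if (dmScope === "per-peer") return `${base}:dm:${msg.peerId}`;
  return `${base}:${msg.channel}:dm:${msg.peerId}`;
}
```

Note that `dmScope` only affects direct messages; two senders in the same group always share the group's session.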

6.2 Session Lifecycle

┌──────────────┐ ┌──────────────┐ ┌──────────────┐

│ Session Start │───▶│ Active Use │───▶│ Expiry │

│ (on first │ │ (messages + │ │ (daily 4AM │

│ message) │ │ tool calls)│ │ or idle) │

└──────────────┘ └──────┬───────┘ └──────────────┘

│

┌──────▼───────┐

│ Compaction │

│ (context │

│ window │

│ management)│

└──────────────┘

- Daily reset: default 4:00 AM local time

- Idle reset: optional sliding window (`idleMinutes`)

- Manual reset: `/new` or `/reset` starts a fresh session

6.3 Persistence

- Transcripts: JSONL files at `~/.openclaw/agents/<agentId>/sessions/<SessionId>.jsonl`

- Store: `sessions.json`, a map of `sessionKey → {sessionId, updatedAt, ...}`

- Origin metadata: each session records where it came from (channel, sender, thread, etc.)

6.4 Context Window & Compaction

Every model has a finite context window. When it fills up:

Full conversation history

→ Older messages summarized into a compact entry

→ Recent messages kept intact

→ Summary persisted in JSONL

→ Future requests use: [summary] + [recent messages]

Compaction vs Pruning:

- Compaction: summarizes and persists in the transcript (permanent)

- Session pruning: trims old tool results only, in-memory, per request (non-destructive)
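The difference between the two can be sketched in a few lines. This is a simplified illustration with hypothetical names and a hypothetical `Message` shape; in the real system the summary would come from a model call, which is injected here as the `summarize` callback.

```typescript
interface Message {
  role: "user" | "assistant" | "tool" | "summary";
  text: string;
}

// Compaction: replace everything except the last `keepRecent` messages with
// a single summary entry. The result is what gets persisted.
function compact(
  history: Message[],
  keepRecent: number,
  summarize: (older: Message[]) => string
): Message[] {
  if (history.length <= keepRecent) return history.slice();
  const cut = history.length - keepRecent;
  const older = history.slice(0, cut);
  const recent = history.slice(cut);
  return [{ role: "summary", text: summarize(older) }, ...recent];
}

// Pruning: build a per-request view that blanks old tool results.
// The input array is not modified, so nothing is lost on disk.
function pruneToolResults(history: Message[], keepRecent: number): Message[] {
  const cut = Math.max(0, history.length - keepRecent);
  return history.map((m, i) =>
    i < cut && m.role === "tool" ? { role: m.role, text: "[tool result pruned]" } : m
  );
}
```

Compaction changes what future requests can ever see; pruning only changes what a single request sends.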

7. Skills System — Teaching the Agent New Tricks

Skills are the agent's learned capabilities — structured instruction sets for specific tools and tasks.

7.1 Architecture

┌─────────────────────────────────────────┐

│ Skill Resolution │

│ │

│ Workspace skills (highest priority) │

│ └── <workspace>/skills/ │

│ Managed skills │

│ └── ~/.openclaw/skills/ │

│ Bundled skills (lowest priority) │

│ └── <install>/skills/ │

└─────────────────────────────────────────┘

7.2 Lazy Loading Pattern

Skills are NOT injected into the prompt. Only a compact metadata list is:

<available_skills>

<skill>

<name>weather</name>

<description>Get current weather and forecasts</description>

<location>/path/to/weather/SKILL.md</location>

</skill>

</available_skills>

The model is instructed to read the SKILL.md only when the task matches. This keeps prompt overhead minimal (~97 chars + field lengths per skill) while still enabling targeted tool use.

7.3 Gating (Load-Time Filters)

Skills can declare requirements in YAML frontmatter:

- `requires.bins`: binaries that must exist on PATH

- `requires.env`: environment variables that must be set

- `requires.config`: config paths that must be truthy

- `os`: platform restrictions (darwin, linux, win32)

Skills are snapshotted when a session starts and reused for subsequent turns (hot-reloaded on filesystem changes).
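Resolution precedence and gating together can be sketched as a filter followed by a priority merge. The `Skill` and `Host` shapes and function names are hypothetical, and `requires.config` is left out for brevity; the three sources and the gating fields come from the text above.

```typescript
interface Skill {
  name: string;
  source: "workspace" | "managed" | "bundled";
  requiresBins?: string[];
  requiresEnv?: string[];
  os?: string[];
}

// Hypothetical description of the machine the Gateway runs on.
interface Host {
  platform: string;
  bins: string[];
  env: Record<string, string | undefined>;
}

// Higher number wins when two sources define the same skill name.
const PRIORITY: Record<Skill["source"], number> = { workspace: 3, managed: 2, bundled: 1 };

// Load-time gate: every declared requirement must hold on this host.
function isEligible(skill: Skill, host: Host): boolean {
  const binsOk = (skill.requiresBins ?? []).every((b) => host.bins.indexOf(b) !== -1);
  const envOk = (skill.requiresEnv ?? []).every((k) => (host.env[k] ?? "") !== "");
  const osOk = skill.os === undefined || skill.os.indexOf(host.platform) !== -1;
  return binsOk && envOk && osOk;
}

// Drop ineligible skills, then keep the highest-priority copy of each name.
function resolveSkills(skills: Skill[], host: Host): Skill[] {
  const resolved: Skill[] = [];
  for (const s of skills) {
    if (!isEligible(s, host)) continue;
    let idx = -1;
    for (let i = 0; i < resolved.length; i++) {
      if (resolved[i].name === s.name) idx = i;
    }
    if (idx === -1) resolved.push(s);
    else if (PRIORITY[s.source] > PRIORITY[resolved[idx].source]) resolved[idx] = s;
  }
  return resolved;
}
```

The resolved list is what would be rendered into the `<available_skills>` metadata block for the session.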

8. Sub-Agent Architecture — Parallel Work

Sub-agents enable background parallel execution without blocking the main conversation.

8.1 Design

Main Agent Run

│

├── sessions_spawn("Research X") → returns immediately

│ │

│ ▼

│ Sub-agent Session (isolated)

│ │

│ ├── Own context window

│ ├── Own tool execution

│ ├── Restricted tools (no session tools)

│ └── Announces result back to requester chat

│

├── sessions_spawn("Analyze Y") → returns immediately

│ │

│ ▼

│ Sub-agent Session (isolated)

│ └── ...

│

└── Continues main conversation

8.2 Key Design Decisions

- Session isolation: each sub-agent gets `agent:<agentId>:subagent:<uuid>`

- No nesting: sub-agents cannot spawn sub-agents (prevents fan-out)

- Restricted tools: no `sessions_list`, `sessions_history`, `sessions_send`, `sessions_spawn` by default

- Announce pattern: results are posted back to the requester chat with status, runtime, token usage, and cost

- Auto-archive: sessions archived after configurable timeout (default 60 min)

- Concurrency: dedicated lane with configurable max (default 8)

- Cost control: can use cheaper models for sub-agents via `agents.defaults.subagents.model`

9. Heartbeat & Cron — Proactive Behavior

9.1 Heartbeat

Heartbeats are periodic agent turns in the main session. The Gateway fires a heartbeat every N minutes (default 30m) with a configurable prompt.

┌──────────┐ ┌──────────┐ ┌──────────────────┐

│ Timer │───▶│ Agent │───▶│ HEARTBEAT_OK │──▶ Suppressed

│ (30m) │ │ Turn │ │ (nothing urgent) │

└──────────┘ └────┬─────┘ └──────────────────┘

│

├──────────▶ Alert text ──▶ Delivered to chat

│

└──────────▶ Background work (memory maintenance,

file organization, etc.)

Response contract:

- `HEARTBEAT_OK` = nothing needs attention (suppressed, never delivered)

- Anything else = alert content (delivered to the configured channel)

- `HEARTBEAT.md` is an optional workspace checklist the agent reads each heartbeat
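The response contract amounts to a small classification step on the Gateway side, sketched below. The function name and the exact-match comparison are assumptions (the real matching rules may be more lenient), and treating an empty reply as "nothing to deliver" is also an assumption.

```typescript
type HeartbeatOutcome = { deliver: false } | { deliver: true; text: string };

// Decide whether a heartbeat reply should reach the user.
function classifyHeartbeatReply(reply: string): HeartbeatOutcome {
  const text = reply.trim();
  // The sentinel means "nothing needs attention": suppress the turn.
  if (text === "" || text === "HEARTBEAT_OK") return { deliver: false };
  // Anything else is alert content for the configured channel.
  return { deliver: true, text };
}
```

The sentinel keeps routine heartbeats silent while still letting the agent do background work during the turn.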

9.2 Cron

For tasks requiring exact timing or isolation:

- Schedule types: one-shot (`at`), recurring (`every`), cron expression (`cron`)

- Payload types: `systemEvent` (inject into main session) or `agentTurn` (isolated session)

- Delivery: announce results to a specific channel/recipient

- Isolated cron jobs mint fresh sessions per run

When to use which:

| Heartbeat | Cron |
|---|---|
| Batch multiple checks | Exact timing needed |
| Needs main session context | Needs isolation |
| Timing can drift | Different model/thinking |
| Reduce API calls | One-shot reminders |

10. Sandboxing — Blast Radius Reduction

OpenClaw can run tool execution inside Docker containers to limit damage.

10.1 Modes

- `off`: tools run directly on the host

- `non-main`: sandbox only non-main sessions (group chats, sub-agents)

- `all`: every session runs in a sandbox

10.2 Scope

- `session`: one container per session

- `agent`: one container per agent

- `shared`: one container for all sandboxed sessions

10.3 Workspace Access

- `none`: tools see a sandbox workspace (no host access)

- `ro`: host workspace mounted read-only

- `rw`: host workspace mounted read-write

10.4 Escape Hatches

- `tools.elevated`: explicit host execution bypass (for authorized senders)

- Tool policy: allow/deny rules still apply before sandbox rules

- Custom bind mounts: expose specific host directories
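The mode and scope settings from 10.1 and 10.2 reduce to two small decisions, sketched here. The function names and the container naming scheme are hypothetical; only the mode and scope values come from the text.

```typescript
type SandboxMode = "off" | "non-main" | "all";
type SandboxScope = "session" | "agent" | "shared";

// Should tool execution for this session run inside a container?
function shouldSandbox(mode: SandboxMode, sessionKey: string, mainSessionKey: string): boolean {
  if (mode === "off") return false;
  if (mode === "all") return true;
  // "non-main": only the main session runs on the host.
  return sessionKey !== mainSessionKey;
}

// Which container does the session use? (Naming scheme is illustrative.)
function containerName(scope: SandboxScope, agentId: string, sessionKey: string): string {
  if (scope === "shared") return "sandbox-shared";
  if (scope === "agent") return `sandbox-agent-${agentId}`;
  return `sandbox-session-${sessionKey}`;
}
```

With `non-main` mode and `session` scope, a group chat and a sub-agent each get their own container while the owner's main session keeps direct host access.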

11. Hooks & Plugins — Extensibility

11.1 Hooks (Event-Driven Scripts)

Hooks fire when events occur in the Gateway:

Event Types:

├── command:new — session reset

├── command:reset — session reset

├── command:stop — run aborted

├── agent:bootstrap — before system prompt finalized

└── gateway:startup — after channels start

Hooks are discovered from three directories (workspace → managed → bundled) and enabled via CLI. They're TypeScript handlers that receive an event context with session info, workspace access, and a message push array.

Bundled hooks:

- `session-memory`: saves session context to memory on `/new`

- `command-logger`: audit trail of all commands (JSONL)

- `boot-md`: runs `BOOT.md` on gateway startup

- `soul-evil`: persona swap for fun (configurable chance + purge window)
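To give a feel for what a hook handler looks like, here is a sketch in the spirit of `command-logger`. The `HookEvent` shape and `HookHandler` type are assumptions based on the description above (session info plus a message push array), not the actual OpenClaw hook API, and the log is kept in memory instead of being written to a JSONL file.

```typescript
// Hypothetical event context passed to a hook handler.
interface HookEvent {
  type: string;       // e.g. "command", "agent", "gateway"
  action: string;     // e.g. "new", "reset", "stop"
  sessionKey: string;
  timestamp: Date;
  messages: string[]; // handlers push text here to send it to the user
}

type HookHandler = (event: HookEvent) => void;

// In-memory stand-in for an append-only JSONL audit file.
const auditLog: string[] = [];

// Log every command event as one JSON line; greet the user on /new.
const commandLogger: HookHandler = (event) => {
  if (event.type !== "command") return;
  auditLog.push(
    JSON.stringify({
      ts: event.timestamp.toISOString(),
      action: event.action,
      sessionKey: event.sessionKey,
    })
  );
  if (event.action === "new") event.messages.push("Started a fresh session.");
};
```

Filtering on the event type at the top of the handler keeps it cheap when it is registered for a broad set of events.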

11.2 Plugins (In-Process Extensions)

Plugins are TypeScript modules loaded at runtime that can register:

- Gateway RPC methods and HTTP handlers

- Agent tools

- CLI commands

- Background services

- Skills (via manifest)

- Auto-reply commands

Plugins run in-process with the Gateway. They have lifecycle hooks:

before_agent_start → agent_end

before_compaction → after_compaction

before_tool_call → after_tool_call

tool_result_persist

message_received → message_sending → message_sent

session_start → session_end

gateway_start → gateway_stop

12. Node System — Physical World Integration

Nodes are paired devices (macOS, iOS, Android, headless) that connect via WebSocket with role: node.

Capabilities:

- `camera.*`: snap photos, record clips (front/back)

- `screen.record`: screen capture

- `location.get`: GPS coordinates

- `system.run`: execute commands on the node

- `canvas.*`: display UI on node screens

Pairing: device-based identity → approval → device token issuance

This enables the agent to interact with the physical world: take photos, check location, run commands on remote machines, and display dashboards.

13. Security Architecture

13.1 Layered Security Model

┌─────────────────────────────────────────┐

│ Layer 1: Gateway Auth (token-based) │

├─────────────────────────────────────────┤

│ Layer 2: Device Pairing + Trust │

├─────────────────────────────────────────┤

│ Layer 3: Channel Allowlists │

├─────────────────────────────────────────┤

│ Layer 4: Tool Policy (allow/deny) │

├─────────────────────────────────────────┤

│ Layer 5: Exec Approvals │

├─────────────────────────────────────────┤

│ Layer 6: Sandbox (Docker isolation) │

├─────────────────────────────────────────┤

│ Layer 7: Send Policy (outbound gates) │

└─────────────────────────────────────────┘

13.2 System Prompt Safety

The system prompt includes advisory guardrails:

- No independent goals (no self-preservation, replication, resource acquisition)

- Prioritize safety and human oversight over completion

- Comply with stop/pause/audit requests

- Do not manipulate to expand access

These are advisory (model behavior guidance). Hard enforcement uses tool policy, exec approvals, sandboxing, and channel allowlists.

13.3 Session Isolation

- `dmScope: "per-channel-peer"` recommended for multi-user setups

- `MEMORY.md` only loaded in private sessions (never group contexts)

- Sandbox per-session prevents cross-session filesystem access

14. What Makes This Architecture Interesting (For Architects)

14.1 The Statefulness Problem

LLMs are stateless functions: f(prompt) → response. OpenClaw's core challenge is making this feel stateful through:

- File-based memory that survives process restarts

- Session transcripts (JSONL) that replay conversation history

- Pre-compaction memory flush that extracts durable knowledge before context is lost

- Vector search that enables semantic recall across sessions

14.2 The Context Window as a Resource

The context window is the agent's "working memory" — finite and expensive. OpenClaw manages it like a cache:

- Compaction = eviction with summarization

- Pruning = trimming low-value data (old tool results)

- Skills lazy loading = demand paging for instructions

- Bootstrap truncation = size limits on injected files

14.3 Single-Writer Architecture

The Gateway is a single-writer system for each session. The command queue ensures:

- Only one agent run per session at a time

- No concurrent writes to session transcripts

- Deterministic ordering of multi-channel messages

14.4 The Workspace as Source of Truth

The agent workspace is a git-backable directory of plain Markdown files. This means:

- Memory is human-readable and editable

- Version control gives you audit trails

- No database to manage (SQLite for vector search only)

- Migration is `git clone` + config update

14.5 Skills as Demand-Loaded Instructions

Instead of embedding all tool instructions in every prompt (token-expensive), OpenClaw lists skills as metadata and lets the model read them on demand. This is analogous to a developer having an IDE with documentation: you don't read every doc on startup, you look up what you need when you need it.

15. Deployment: Our AWS Instance

For reference, this instance of OpenClaw (Loki) runs on:

| Component | Detail |
|---|---|
| Instance | t4g.xlarge ARM64 (Graviton) |
| OS | Ubuntu Linux 6.14 (arm64) |
| Region | us-east-1 |
| Model | Claude Opus 4.6 via AWS Bedrock |
| Channels | Telegram (primary) |
| VPC | openclaw-master-vpc (10.0.0.0/16) |
| Security | fail2ban, Security Hub, WAF, Inspector |

The Gateway runs as a systemd service, tools execute directly on the host (no sandboxing for the main session), and memory is backed to the local workspace.

16. Summary: The Building Blocks

| Concept | Implementation | Why It Matters |
|---|---|---|
| Persistence | Markdown files + JSONL transcripts | Human-readable, git-backable, no DB |
| Memory | File layers + vector search + flush | Stateless model acts stateful |
| Context Management | Compaction + pruning + lazy skills | Efficient use of finite token window |
| Concurrency | Lane-based command queue | Safe serialization without locks |
| Extensibility | Hooks + plugins + skills | Modify behavior without forking |
| Security | 7-layer model (auth → send policy) | Defense in depth |
| Multi-channel | Bridge pattern + session routing | One agent, many surfaces |
| Proactive Behavior | Heartbeat + cron | Agent acts without being asked |
| Parallel Work | Sub-agents with announce | Background tasks don't block chat |
| Physical World | Node pairing + device commands | Agent reaches beyond the screen |

Document generated by Loki 😈 — OpenClaw instance running on AWS Bedrock (Claude Opus 4.6)

February 2026